When AI Becomes the Source of Truth: Rethinking Transcript‑Free Meeting Summaries

You will soon be able to get AI-generated meeting summaries in Microsoft Teams without a recording or transcript.

Microsoft has announced this feature as serving the compliance policies of certain organizations. But are we just trading one area of questionable compliance for another? After all, following responsible practices should be a baseline expectation when using AI.

One of the important tenets we have established in business-related AI use over the last few years is that output should be attributable to a source. That same principle is a key framing of the new multi-model Critique mode recently added to Researcher.

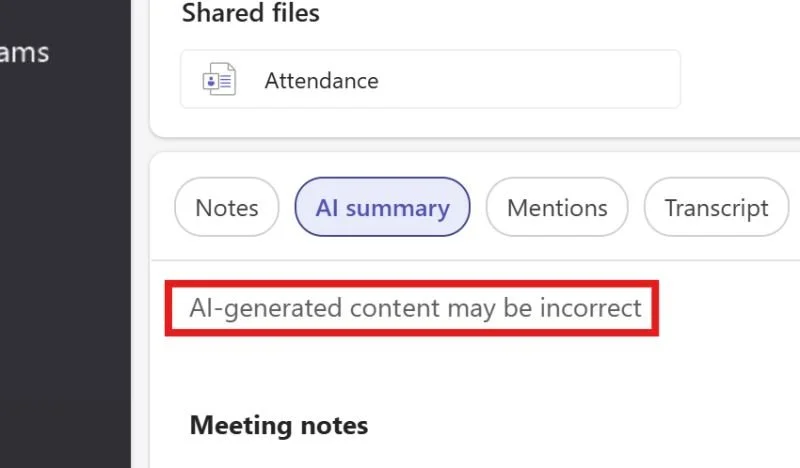

A set of AI-generated meeting notes with no associated transcript suddenly turns the AI model's understanding into the source of truth. And while Copilot's work in Teams is generally fairly accurate, it isn't perfect. Currently, every AI meeting summary comes with a warning label from Microsoft stating it might be wrong. I'm interested to learn in what circumstances an accurate transcript is more of a liability than an inaccurate set of AI-generated notes.

I think there is an opportunity here for us to think deeply about whether our goal should be to bend AI technology to old ways of thinking about work, or whether AI should prompt us to update our practices, regulations, and laws. Bolting on AI in a way that introduces risk in order to be compliant is the worst path for everyone: users get risky outputs, and the AI vendors get the blame.

What do you think? Do you have a clear use case for transcript-free AI meeting summaries that still feel compliant with responsible AI practices?

First posted on LinkedIn on 04/15/2026.